Everyone who regularly deals with performance issues is probably familiar with this question: at what point does being proactive turn into an obsession with every harmless little anomaly? Where do you draw the line? It’s a difficult question and there’s no obvious answer, at least none that I’m aware of. I face this dilemma on an almost daily basis, including in the little story that I’m about to share in this post.

Continue reading “Increase in physical writes due to a plan flip”

Category: Uncategorized

Log file sync and LGWR redo wait for copy wait events

A few years ago I wrote a series of posts about various scenarios of log file sync (LFS) waits. A few days ago, I had an issue which added yet another scenario to that already diverse collection, and I thought it could be of interest to others in the Oracle community. To set the scene, it’s the same 18c (18.10 to be more accurate) 3-node X6-2 Exadata cluster where most of the issues I describe in my blog occur. At a certain moment, I noticed LFS go up quite a bit, with log file parallel write (LFPW) also showing signs of an increase, but a much more moderate one. I’ve got a script to graph wait event stats for multiple wait events (e.g. using a regular expression pattern for their names); below you can see a panel summarising average wait times for events containing “log file” or “redo” in their names.
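The script itself is not shown here, so the following is only a minimal sketch of the idea, not the actual implementation. It assumes two snapshots of cumulative counters in the style of `v$system_event` (`TOTAL_WAITS` and `TIME_WAITED_MICRO` per event); the function name `avg_wait_ms`, the snapshot layout and all the numbers are made up for illustration.

```python
import re


def avg_wait_ms(snap_start, snap_end, pattern=r"log file|redo"):
    """Average wait time (ms) per matching event between two snapshots.

    Each snapshot maps event name -> (total_waits, time_waited_micro),
    i.e. cumulative counters (hypothetical layout, assumed for this sketch).
    """
    rx = re.compile(pattern, re.IGNORECASE)
    result = {}
    for event, (waits_end, micro_end) in snap_end.items():
        if not rx.search(event):
            continue  # event name does not match the pattern
        waits_start, micro_start = snap_start.get(event, (0, 0))
        delta_waits = waits_end - waits_start
        if delta_waits <= 0:
            continue  # no new waits in the interval, or counters were reset
        # microseconds per wait, converted to milliseconds
        result[event] = (micro_end - micro_start) / delta_waits / 1000.0
    return result


# Made-up numbers, purely illustrative
start = {
    "log file sync": (1000, 2_000_000),
    "log file parallel write": (1200, 600_000),
    "db file sequential read": (5000, 4_000_000),
}
end = {
    "log file sync": (2000, 7_000_000),
    "log file parallel write": (2200, 1_400_000),
    "db file sequential read": (9000, 8_000_000),
}

averages = avg_wait_ms(start, end)
for name, ms in sorted(averages.items()):
    print(f"{name}: {ms:.2f} ms")
```

Working with deltas between snapshots rather than the raw cumulative values matters here: averages computed since instance startup would smooth out exactly the kind of increase described above.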

Continue reading “Log file sync and LGWR redo wait for copy wait events”

Full table scan burning CPU

This post is not so much a serious investigation as a few curious observations, followed by some general musings prompted by them.

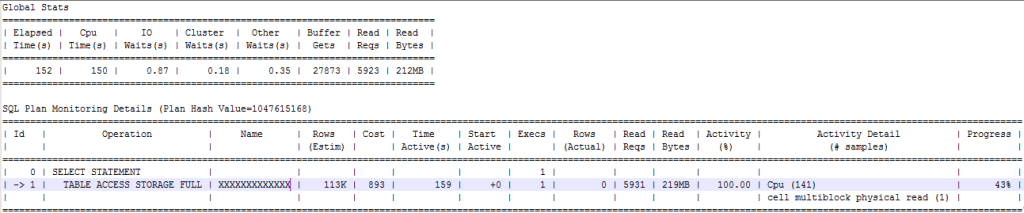

So… When you see a SQL execution plan and runtime statistics like the ones below, what thoughts come to mind?

Performance issues around GTT in 19c

It has been (unforgivably) long since my last blog post, so let me try and rehabilitate myself by posting a couple of recent interesting investigations. I’ll start with one that combines two of my favourites: stack profiling and “twittershooting” (collective troubleshooting using Twitter as a collaboration tool). The database in question is a 3-node RAC cluster, Oracle version 18.11 being upgraded to 19.10. The problem was detected during the pre-upgrade testing with the “compatible” parameter set to 18 (that part was essential for the backout plan in case of major post-upgrade complications). I mention this because, for whatever reason, the problem only showed up when the parameter was set.

Continue reading “Performance issues around GTT in 19c”

CloudSQL to BigQuery Dataflow Pipeline in GCP

Introduction

Moving data between Cloud SQL and BigQuery is fairly straightforward with federated queries. However, federated queries are not available for Cloud SQL instances created with a private IP address, which might be the only option in many organisations due to security constraints. As an alternative, a Dataflow pipeline can be built to do the job. Moreover, there is a readily available template (JDBC to BigQuery) which in an ideal world would have made this approach easy as well. However, there are some bits and pieces which are not quite obvious. At least, they weren’t for me: I had to spend a few days building a working pipeline, and in the end I had to ask a GCP expert for help. In this blog article I try to address these issues to make life easier for other people facing the same challenge. In my example I’m using Postgres Cloud SQL, although I would expect the MySQL case to be very similar if not identical.

Continue reading “CloudSQL to BigQuery Dataflow Pipeline in GCP”

Performance monitoring and anomalies

Tech bloggers (myself included) tend to like writing about big issues, with massive impact, a company’s reputation (or in extreme cases even its very existence) at stake, etc. This adds drama to the story (and as an added bonus, helps us feel more important). I think it can also lead to a certain bias, making the IT community believe that only major issues matter. Whenever you spot a relatively harmless anomaly and try to find out more about it on a DBA forum, “is there an actual problem you’re trying to solve” is a very likely response. And if you honestly answer “not really”, you can expect a lecture on “obsessive tuning disorder” and a suggestion to stick to a simple principle, “if it ain’t broken, don’t fix it”. I think this mentality was initially a sound reaction to some inquisitive minds trying to solve non-issues and occasionally creating issues out of nothing instead. When taken too far, however, this attitude becomes dangerous. Anomalies are important even without an immediate impact. Sometimes they are important even without any impact at all. In this post, I’d like to illustrate this with an example.

Continue reading “Performance monitoring and anomalies”

Memory fragmentation via inode cache growth

In my last blog post I covered some details of our recent battle with memory fragmentation problems on an OL6 server (Exadata compute node). It mostly focused on page cache growth, which was the main scenario. However, there was also a secondary scenario with a completely different mechanism, and I will describe it in this post.

Continue reading “Memory fragmentation via inode cache growth”

Memory fragmentation via buffered file I/O

Last year I spent quite some time tackling various memory fragmentation issues on an Exadata cluster (I’ve described some of my experiences here and here). In the end, everything was resolved and the symptoms went away, only to come back in a different form a few months later. First we had a minor episode months ago: some applications experienced timeouts when trying to connect, and there was a massive spike in the Load Average metric, with hundreds of processes in the “D” state stuck on rtnetlink_rcv and rtnl_lock. Then everything became stable again, until a few weeks ago, when the same symptoms came back, but with a much more severe impact: node evictions, reboots and failovers caused serious disruption for the application.

Continue reading “Memory fragmentation via buffered file I/O”

Installing R shiny server on OCI compute instance (always free tier)

Introduction

Oracle Cloud Infrastructure (OCI) is the next-generation cloud platform run by Oracle. Like some of its competitors, Oracle offers an always free tier where you can access a range of cloud products and services without ever having to pay anything. And while you will need to provide credit card information to prove that you are not a bot, the card will not be charged unless you explicitly upgrade to a paid account.

Continue reading “Installing R shiny server on OCI compute instance (always free tier)”